AI in the Software Development Lifecycle

A practical approach to closing the workflow gap

Executive Summary

AI is already helping software teams move faster. Developers are using AI to generate and review code. Product teams are using it to draft requirements. QA teams are using it to accelerate test creation and automation.

However, many organizations are not seeing proportional improvements in delivery predictability, quality, or cycle time. The reason is simple: AI is often applied to individual tasks, while the larger software delivery workflow remains fragmented.

This paper is intended for technology, product, and delivery leaders who are seeing early AI productivity gains but are not yet seeing measurable improvements across the full software development lifecycle.

The real opportunity is not simply using AI to make each team faster. It is using AI to improve how work is defined, structured, built, and validated throughout the lifecycle. When organizations close the workflow gap, they reduce ambiguity, improve handoffs, shift validation earlier, and create a more predictable delivery model.

Software teams are moving faster, but delivery is not improving

AI is already reshaping how software is developed. Many organizations are applying it across different parts of the lifecycle, from generating code to drafting requirements and automating testing. These efforts are producing measurable gains within individual teams, particularly in speed and efficiency.

However, those gains are not consistently translating into better delivery outcomes.

Development cycles remain unpredictable. Rework continues to consume a significant portion of team capacity. Coordination across product, engineering, and QA still requires significant effort. In many cases, the increased speed of execution has made these issues more visible rather than resolving them.

For example, a development team may use AI-assisted coding to complete implementation faster, but if the original requirements are unclear, the team still needs clarification before work can move forward. Similarly, QA automation may accelerate test execution, but if acceptance criteria are incomplete, defects may still be discovered late in the process.

This disconnect highlights a broader issue. The challenge is not whether AI is effective at improving individual tasks. It is how those improvements fit into the broader system of software delivery.

Most organizations are applying AI to accelerate execution within specific stages. Far fewer are using it to improve how work is defined, structured, and transferred across the lifecycle. As a result, they see localized gains rather than meaningful improvements at the system level.

The workflow gap in modern SDLCs

The primary constraint in most software delivery environments is not development throughput. It is the structure and consistency of the workflow itself.

The workflow gap is the loss of clarity, context, and consistency that occurs as work moves from business intent to requirements, tickets, implementation, and validation.

This gap exists across nearly every SDLC, regardless of tooling, methodology, or organizational structure.

In a typical lifecycle, business needs are captured at a high level and translated into tickets or user stories. Development teams interpret those tickets and implement the work. QA validates the outcome, often after development is complete.

At each transition point, information is compressed, interpreted, or lost.

Requirements may not contain enough detail for implementation. Tickets vary in structure and completeness. Development teams compensate by making assumptions or seeking clarification midstream. QA often identifies issues that originate from earlier ambiguity rather than defects introduced during development.

These breakdowns create a consistent pattern of rework. Issues discovered later in the process require teams to revisit earlier decisions, re-align stakeholders, and adjust implementation. The cumulative effect is a delivery model that absorbs significant inefficiency without making it visible as a single problem.

Over time, the cost of this inefficiency becomes material. Teams are not limited by their ability to build software, but by the effort required to reconcile gaps in how work was initially defined and communicated.

Why AI alone does not solve the problem

AI is already improving many parts of the SDLC. Developers are able to generate and review code more quickly. Product teams can create more structured requirements. QA teams can automate aspects of testing that were previously manual.

These improvements are meaningful, but they are often implemented independently.

Each function applies AI to its own workflows without a consistent model for how outputs are consumed by the next stage. As a result, the quality and structure of work vary across the lifecycle, and the burden of alignment remains.

A common example illustrates this clearly.

A product team uses AI to generate detailed requirements based on business inputs. The output is more complete than before, but it is not aligned with how work is structured in the development system. When the work reaches engineering, teams still need to reinterpret and reformat it before they can begin implementation.

At the same time, developers are using AI to accelerate coding tasks, but they are doing so based on inputs that still lack consistency. QA continues to identify issues that originate from ambiguity in those inputs, even as testing becomes more automated.

In this scenario, every team is improving its own efficiency. However, the system as a whole remains constrained by the same friction points.

AI accelerates individual tasks, but without a consistent approach to how work is defined and transferred, it does not reduce the need for interpretation, clarification, or rework. The Workflow Gap remains intact.

Improving delivery starts with how work flows

Addressing this issue requires shifting focus from task optimization to workflow optimization.

Rather than asking how AI can speed up individual steps, organizations need to examine how work flows through the lifecycle and where breakdowns occur. This includes how requirements are defined, how development work is structured, and how validation is performed.

Improving workflow means creating consistency at each stage and reducing the need for downstream interpretation.

Requirements need to be captured in a way that directly supports implementation. Tickets need to follow consistent patterns so that engineering and QA teams can rely on them without rework. Validation needs to begin earlier, when changes are less costly and the context is still intact.
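One way to make "consistent patterns" concrete is a readiness check that runs before a ticket enters development. The sketch below is illustrative only: the `Ticket` fields and the `readiness_gaps` function are hypothetical names chosen for this example, not the schema of Jira, Azure DevOps, or any other tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Ticket:
    """Hypothetical ticket shape; field names are assumptions for illustration."""
    title: str
    business_intent: str = ""
    acceptance_criteria: list[str] = field(default_factory=list)


def readiness_gaps(ticket: Ticket) -> list[str]:
    """Return the reasons a ticket is not ready for development (empty if ready)."""
    gaps = []
    if not ticket.business_intent.strip():
        gaps.append("missing business intent")
    if not ticket.acceptance_criteria:
        gaps.append("no acceptance criteria")
    for i, criterion in enumerate(ticket.acceptance_criteria, start=1):
        if not criterion.strip():
            gaps.append(f"acceptance criterion {i} is empty")
    return gaps


draft = Ticket(title="Export invoices as CSV")
print(readiness_gaps(draft))
```

A check like this does not judge whether a requirement is good. It only ensures that every ticket reaching engineering and QA has the same minimum structure, which is the part of the handoff that can be enforced mechanically.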

When these elements are aligned, the impact is cumulative.

Teams spend less time clarifying intent and more time executing. Fewer issues are introduced early in the process, which reduces the need for late-stage corrections. Coordination becomes more predictable, and delivery outcomes become more consistent.

This shift does not require a fundamental change in team structure or tooling. It requires a more deliberate approach to how work is defined, structured, and validated across the system.

A practical model for an AI-enabled SDLC

A useful way to operationalize this shift is to view the SDLC as a connected system with clearly defined stages and transitions.

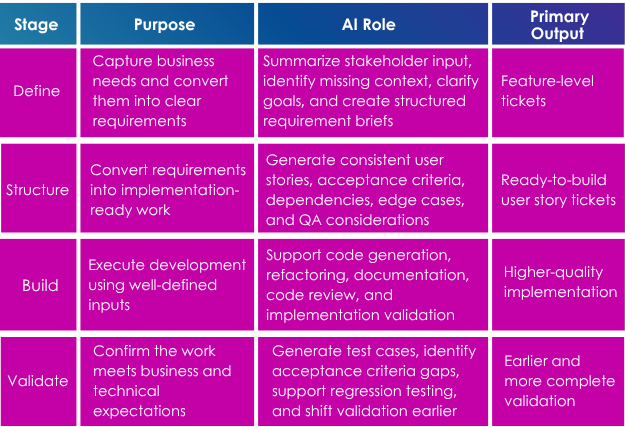

An AI-enabled SDLC can be structured around four stages, in which work is defined, structured, built, and validated.

Most organizations already operate within these stages, but the transitions between them are inconsistent. Each stage produces outputs that are interpreted differently by the next, creating variability and inefficiency.

AI improves each stage individually, but its broader impact comes from improving the consistency of these transitions. When outputs from one stage are directly usable by the next, the need for rework is reduced, and the overall system becomes more efficient.

Where AI delivers the greatest impact

While AI can be applied across the lifecycle, its value is highest in areas where ambiguity creates downstream inefficiencies.

In requirements and ticket creation, AI can transform unstructured inputs into structured outputs that are consistent and actionable. This reduces variability in how work is defined and improves alignment before development begins.

In development, AI supports both code generation and review. This reduces time spent on repetitive tasks and allows developers to focus on implementation decisions. When inputs are consistent, the effectiveness of these tools increases significantly.

In QA, AI enables earlier validation by generating test cases and identifying gaps in acceptance criteria during ticket creation. This shifts quality assurance upstream, reducing the cost of resolving issues.
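As a rough illustration of checking acceptance criteria at ticket creation, the sketch below flags criteria whose wording cannot be tested as written. It is a simple rule-based stand-in, not an AI model, and the `VAGUE_TERMS` list and function names are assumptions made for this example.

```python
from __future__ import annotations

import re

# Illustrative wording that usually cannot be verified as written (an assumption).
VAGUE_TERMS = ("fast", "easy", "user-friendly", "intuitive", "as appropriate", "etc")


def vague_terms_in(criterion: str) -> list[str]:
    """Return the vague terms that appear as whole words in one criterion."""
    text = criterion.lower()
    return [t for t in VAGUE_TERMS if re.search(rf"\b{re.escape(t)}\b", text)]


def review_criteria(criteria: list[str]) -> dict[str, list[str]]:
    """Map each criterion that needs clarification to the terms that triggered it."""
    flagged = {}
    for criterion in criteria:
        hits = vague_terms_in(criterion)
        if hits:
            flagged[criterion] = hits
    return flagged


criteria = [
    "The page should load fast",
    "Given a logged-in user, when they click Export, then a CSV file downloads",
]
print(review_criteria(criteria))
```

In practice an AI assistant could replace the keyword list with a richer review. The point of the sketch is the timing: the question is raised while the ticket is being written, not after the feature has been built.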

When applied together, these capabilities reinforce each other. Clearer requirements lead to more consistent development, and earlier validation reduces the need for downstream correction.

AI-enabled SDLC maturity model

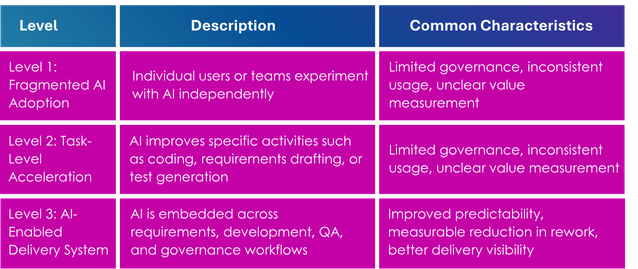

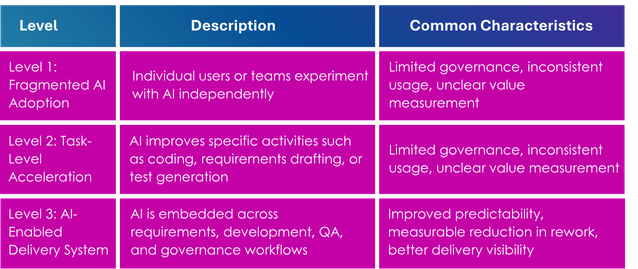

Organizations typically progress through several levels of maturity as they apply AI to the SDLC.

Most organizations begin at Level 1 or Level 2. These stages can create meaningful productivity improvements, but they often do not address the broader workflow issues that limit delivery outcomes.

The greatest value comes from moving toward Level 3, where AI not only helps individuals work faster but also improves how work moves through the system.

Measuring the impact

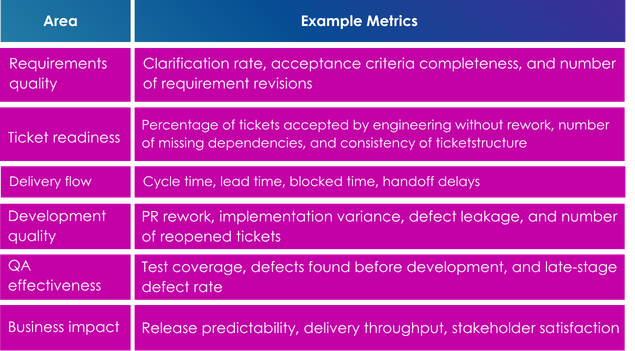

To determine whether AI is improving the software delivery system, organizations need to measure more than individual productivity.

The most useful metrics are those that reveal whether work is becoming clearer, more consistent, and easier to validate.

These metrics help distinguish between task-level acceleration and system-level improvement.

For example, an organization may see faster code generation but no reduction in cycle time. That indicates that the bottleneck may not be coding speed. It may be unclear requirements, inconsistent ticket quality, or late-stage validation.
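A simple way to check where time actually goes is to total the hours tickets spend in each stage. The sketch below assumes a hypothetical event log of `(ticket_id, stage_entered, timestamp)` records; the stage names and the `hours_per_stage` function are illustrative, and a real export from a work-tracking tool will look different.

```python
from __future__ import annotations

from collections import defaultdict
from datetime import datetime


def hours_per_stage(events: list[tuple[str, str, str]]) -> dict[str, float]:
    """Sum the hours tickets spent in each stage.

    Each event is (ticket_id, stage_entered, ISO timestamp). Time in a stage is
    the gap between entering it and the same ticket's next event, so a ticket
    sent back to an earlier stage adds to that stage's total.
    """
    by_ticket = defaultdict(list)
    for ticket_id, stage, ts in events:
        by_ticket[ticket_id].append((datetime.fromisoformat(ts), stage))

    totals = defaultdict(float)
    for history in by_ticket.values():
        history.sort()  # order each ticket's events by time
        for (start, stage), (end, _) in zip(history, history[1:]):
            totals[stage] += (end - start).total_seconds() / 3600
    return dict(totals)


events = [
    ("T-1", "define", "2024-01-01T09:00"),
    ("T-1", "build", "2024-01-03T09:00"),
    ("T-1", "validate", "2024-01-03T17:00"),
    ("T-1", "define", "2024-01-04T09:00"),  # sent back for clarification
    ("T-1", "done", "2024-01-06T09:00"),
]
print(hours_per_stage(events))  # {'define': 96.0, 'build': 8.0, 'validate': 16.0}
```

In this made-up example, build takes 8 hours while definition, including the round trip for clarification, takes 96. Faster coding would barely change the total.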

By measuring the full workflow, organizations can identify where AI is creating meaningful impact and where additional alignment is needed.

What this looks like in practice

Organizations that reduce the Workflow Gap operate with greater consistency across the lifecycle.

Work begins with structured inputs that are directly aligned to how development teams operate. Tickets follow a consistent format, with clearly defined acceptance criteria that both engineering and QA teams can rely on.

Questions that would typically arise during development are addressed earlier, during the definition and structuring stages. This reduces interruptions during execution and allows teams to maintain momentum.

QA is integrated earlier in the process, ensuring that validation is based on clearly defined criteria rather than retrospective interpretation. AI is embedded into core systems such as Azure DevOps or Jira, enabling work to be created, refined, and validated within a shared environment.

The result is not simply faster development, but a more stable and predictable delivery model. Teams spend less time resolving ambiguity and more time delivering against clearly defined objectives.

Common pitfalls to avoid

Organizations attempting to apply AI to the SDLC often encounter similar challenges.

Focusing primarily on tools rather than workflows limits impact. Without addressing how work is defined and transferred, new tools do not resolve underlying inefficiencies.

Fragmented adoption across teams leads to inconsistent outputs. When each function applies AI differently, the variability between stages increases rather than decreases.

A lack of defined success criteria makes it difficult to measure progress. Without clear metrics, organizations struggle to determine whether improvements are occurring at the system level or only within individual tasks.

Another common pitfall is over-indexing on development productivity. Development remains an important part of the SDLC and should not be overlooked. AI-assisted development can create meaningful gains. However, if organizations focus solely on coding speed, they may miss larger inefficiencies upstream and downstream.

Avoiding these pitfalls requires alignment across teams, consistency in how AI is applied, and a focus on measurable outcomes.

When to act

A shift in approach is typically needed when improvements in execution are not reflected in delivery outcomes.

This may appear as faster development cycles that do not translate into shorter timelines, ongoing clarification between teams, or recurring issues identified late in the process. In some cases, AI adoption continues to expand without producing consistent improvements.

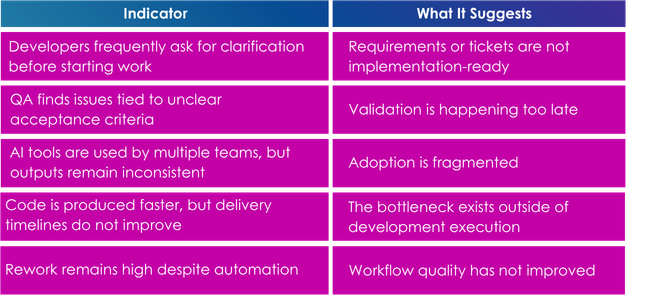

Common indicators include:

These patterns indicate that the constraint is not within individual tasks, but within how work flows across the lifecycle.

A practical next step

Improving the SDLC begins with understanding how work currently flows across the organization.

This includes identifying where work slows down, where information is lost, and where rework is introduced. Without this level of visibility, it is difficult to determine where AI can be applied effectively.

A practical starting point is an AI-enabled SDLC assessment focused on five areas:

From there, organizations can identify opportunities to improve consistency, reduce rework, and apply AI to deliver meaningful impact. The goal is not to introduce AI everywhere at once.

The goal is to apply AI where it improves the way the delivery system operates.

Closing the workflow gap is the real opportunity

AI has already demonstrated its ability to improve how individual tasks are performed. The next phase of value creation comes from improving how those tasks connect.

Organizations that focus solely on acceleration will continue to see incremental gains. Those that focus on improving how work flows through the SDLC will see greater consistency, efficiency, and predictability in delivery.

The advantage is not in using AI more broadly. It is in applying AI so that it reduces ambiguity, improves handoffs, strengthens validation, and changes how the system operates.

For technology and delivery leaders, the next step is to assess where the Workflow Gap exists today and identify where AI can create measurable improvement across the full lifecycle.

That is where the real opportunity lies.

About XCentium

XCentium is an award-winning consultancy that helps global brands design, build, and optimize digital experiences. The company combines deep expertise across digital experience and commerce platforms, as well as AI, data, and cloud, with senior-led teams and a client-first approach to deliver measurable business outcomes. For more information, visit XCentium.com.