Multi-agent AI is having its moment.

CrewAI has 100,000+ certified developers. LangGraph has surpassed tens of millions of monthly downloads. Everyone from OpenAI to Google is shipping orchestration frameworks with the same pitch: define your agents, wire them together, let them collaborate.

We evaluated every major framework and chose a fundamentally different path.

What the Frameworks Do

They all solve the same core problem: how do you get multiple LLM calls to coordinate?

CrewAI gives you YAML-configured agents with roles, goals, and backstories arranged in chains or hierarchies. LangGraph gives you a directed graph: a state machine in which each node is typically an LLM call. OpenAI’s Agents SDK uses handoffs, where Agent A transfers control to Agent B along with the conversation context. Google’s Agent Development Kit (ADK) delegates through parent-child hierarchies. AutoGen puts agents in group chats.

Different patterns, but the same underlying philosophy: orchestration is the hard problem, and agent intelligence comes from the LLM’s general knowledge.

The 20-Agent Myth

You’ve seen the posts, I’m sure. Marketing materials love big numbers: “Orchestrate 20, 30, even 50 agents!” In December 2025, Google Research measured what actually happens at scale. In their tests, centralized systems (one orchestrator, many specialists) contained error amplification to 4.4x, while in decentralized systems errors compounded with every added agent.

The practical sweet spot is 3–10 specialists with an orchestrator.

When you see “20–30 agents” in the wild, it’s almost always one of three things:

A routing catalog. 20–30 definitions exist, but only 3–5 activate per request.

Simulations. Research experiments with LLM personas in virtual worlds. Interesting science, not production software.

Thin wrappers. Each “agent” is a system prompt and an API call. Functions with personality.

Real multi-agent production deployments at Klarna, Elastic, and Amazon use small, focused teams. The intelligence is in the design, not the headcount.

What’s Actually Missing

Here’s a QA agent in CrewAI:

```yaml
# agents.yaml: CrewAI's YAML agent configuration
qa_agent:
  role: "QA Tester"
  goal: "Find bugs in the code"
  backstory: "You are an experienced QA engineer..."
```

That’s the entire definition. No testing rubric. No predefined sequence of unit tests, then integration, then E2E. No output template. No circuit breaker when acceptance criteria start failing. And for UX tickets, no pixel-diff verification and no responsive viewport testing. No rule for how many fix cycles to allow before escalating.

Frameworks give you coordination primitives. In plain English, frameworks define who talks to whom, and in what order. But they leave domain expertise to the LLM’s training data. Ask yourself how well an LLM, even a frontier one, knows your business. For generic work like summarizing documents or routing customer inquiries, that’s fine. For specialized work, it’s not fine at all. Examples include reviewing a PR for security vulnerabilities, validating findings to filter out false positives, and routing confirmed issues back with line references. An LLM’s training data does not know your process.

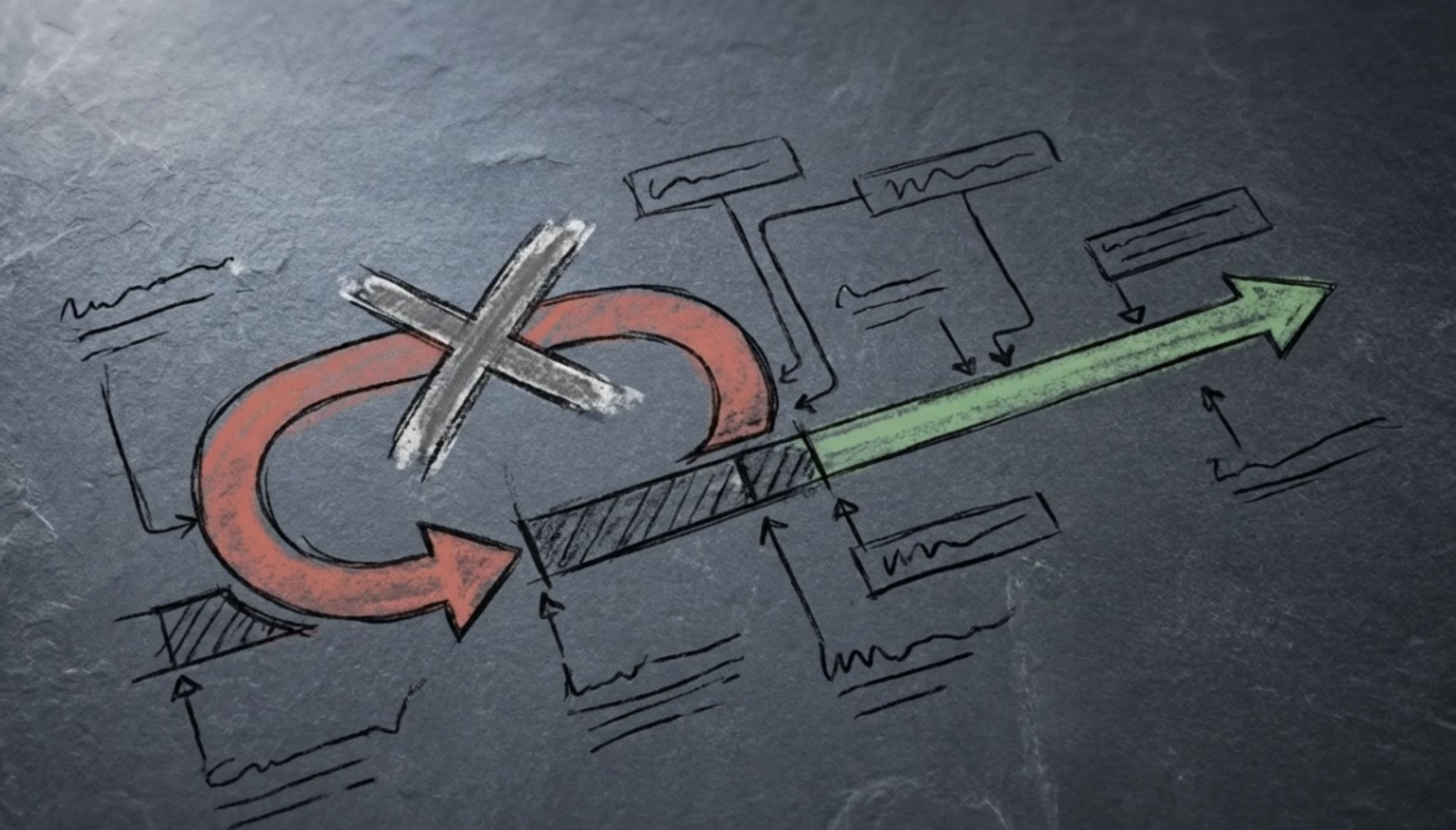

Knowledge-First vs. Orchestration-First

We took the opposite approach: deep agents with simple orchestration, instead of shallow agents with sophisticated orchestration.

Our QA agent receives an instruction file of 100+ lines with directives like “run unit tests first, then integration tests, then E2E flows via Playwright, then Figma compliance checks with pixel diffs and computed CSS verification.” It checks for a playwright.config.ts before attempting E2E. It exports a QA matrix in Markdown that downstream agents can parse. The downstream review agent understands the difference between an edge-case failure and a broken core test, and it does not act frivolously on edge cases. Every priority failure gets two fix cycles with a human, followed by escalation if things still aren’t right.
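A minimal sketch of that ordering and output contract follows. It assumes stage results arrive as a simple pass/fail mapping and that the matrix is a three-column Markdown table. `STAGES`, `build_qa_matrix`, and `parse_qa_matrix` are hypothetical names, not the harness’s actual code. The real agent runs these stages inside a Claude Code session rather than through a helper like this.

```python
from pathlib import Path

# Hypothetical stage order taken from the QA instruction file described above.
STAGES = ["unit", "integration", "e2e", "figma"]


def build_qa_matrix(repo_root: Path, outcomes: dict[str, bool]) -> str:
    """Render a Markdown QA matrix. `outcomes` maps stage name -> passed."""
    has_playwright = (repo_root / "playwright.config.ts").exists()
    rows = ["| Stage | Status | Note |", "| --- | --- | --- |"]
    for stage in STAGES:
        if stage == "e2e" and not has_playwright:
            # Mirror the rule: look for a Playwright config before attempting E2E.
            rows.append(f"| {stage} | skipped | no playwright.config.ts |")
        elif stage not in outcomes:
            rows.append(f"| {stage} | not run | |")
        else:
            status = "pass" if outcomes[stage] else "fail"
            rows.append(f"| {stage} | {status} | |")
    return "\n".join(rows)


def parse_qa_matrix(markdown: str) -> dict[str, str]:
    """Downstream side: recover a stage -> status mapping from the table."""
    statuses = {}
    for line in markdown.splitlines()[2:]:  # skip the header and separator rows
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        statuses[cells[0]] = cells[1]
    return statuses


# Usage: a repo path with no Playwright config, so E2E is reported as skipped.
matrix = build_qa_matrix(Path("no-such-repo"), {"unit": True, "integration": False})
print(matrix)
print(parse_qa_matrix(matrix))
```

Keeping the matrix as plain Markdown means a human can read it in the PR. A downstream agent can also parse it without a custom format.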

This isn’t prompt engineering. It’s domain expertise expressed as structured files, rubrics, and checklists injected into each agent’s context at runtime. The agent doesn’t reinvent QA from first principles. It gets a playbook.
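The injection step itself can be simple. The sketch below assumes each role has a directory of Markdown files (instructions, rubric, checklist, template). It concatenates those files in a fixed order ahead of the ticket text. The directory layout, the section names, `build_agent_context`, and the ticket key `PROJ-123` are all hypothetical.

```python
import tempfile
from pathlib import Path

# Hypothetical layout: <playbook_root>/<role>/<section>.md, assembled in this order.
SECTION_ORDER = ["instructions", "rubric", "checklist", "template"]


def build_agent_context(playbook_root: Path, role: str, ticket_text: str) -> str:
    """Concatenate a role's playbook files, then the ticket, into one context string."""
    role_dir = playbook_root / role
    parts = []
    for section in SECTION_ORDER:
        path = role_dir / f"{section}.md"
        if path.exists():  # a role may omit sections it does not need
            body = path.read_text(encoding="utf-8").strip()
            parts.append(f"## {section.title()}\n\n{body}")
    if not parts:
        raise FileNotFoundError(f"no playbook files found for role {role!r}")
    parts.append(f"## Ticket\n\n{ticket_text.strip()}")
    return "\n\n".join(parts)


# Usage: a throwaway playbook for a "qa" role with two of the four sections.
with tempfile.TemporaryDirectory() as tmp:
    qa_dir = Path(tmp) / "qa"
    qa_dir.mkdir()
    (qa_dir / "instructions.md").write_text("Run unit tests first.", encoding="utf-8")
    (qa_dir / "checklist.md").write_text("- [ ] playwright.config.ts checked", encoding="utf-8")
    context = build_agent_context(Path(tmp), "qa", "PROJ-123: fix header spacing")

print(context)
```

Putting the playbook before the ticket means every run of a role starts from the same expertise. Only the task changes between runs.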

| Dimension | Orchestration Frameworks | XC Agent Harness |
| --- | --- | --- |
| Agent definition | System prompt + tools | Instruction files with rubrics, templates, checklists |
| Agent knowledge | LLM training data | Injected domain expertise per role |
| Per-agent capability | One LLM call per turn | Full Claude Code session with filesystem, tools, extended reasoning |
| Execution | In-process Python functions | Isolated OS processes in separate git worktrees |
| Orchestration | Sophisticated (graphs, handoffs, state machines) | Simple (three-layer pipeline, file-based communication) |
| Domain depth | Generic (you build everything) | Deep (judge patterns, pixel-diff QA, merge coordination) |
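The “isolated OS processes in separate git worktrees” row can be sketched as follows. `git worktree add -b <branch> <path> <base>` is standard Git. The branch and directory naming, `plan_agent_launch`, and `launch` are hypothetical. The agent command assumes the Claude Code CLI’s non-interactive `-p` (print) mode, which may not be how the harness actually starts its sessions.

```python
import shlex
import subprocess
from dataclasses import dataclass
from pathlib import Path


@dataclass
class AgentLaunch:
    worktree_cmd: list[str]  # creates an isolated checkout on a fresh branch
    agent_cmd: list[str]     # the agent's own OS process, run inside that checkout
    cwd: Path


def plan_agent_launch(repo: Path, ticket: str, unit: str, prompt: str, base: str = "main") -> AgentLaunch:
    """Plan one worktree plus one agent process for a single work unit (naming is hypothetical)."""
    branch = f"agent/{ticket}/{unit}"
    worktree = repo.parent / f"{repo.name}-{ticket}-{unit}"
    return AgentLaunch(
        worktree_cmd=["git", "-C", str(repo), "worktree", "add", "-b", branch, str(worktree), base],
        # Assumes the Claude Code CLI is installed; -p runs a single non-interactive prompt.
        agent_cmd=["claude", "-p", prompt],
        cwd=worktree,
    )


def launch(plan: AgentLaunch, dry_run: bool = True):
    """Return the shell commands when dry_run is set; otherwise start the agent process."""
    if dry_run:
        return [shlex.join(plan.worktree_cmd), f"cd {shlex.quote(str(plan.cwd))} && {shlex.join(plan.agent_cmd)}"]
    subprocess.run(plan.worktree_cmd, check=True)
    return subprocess.Popen(plan.agent_cmd, cwd=plan.cwd)


agent_plan = plan_agent_launch(Path("/repos/shop"), "PROJ-123", "u1", "Implement unit u1 from plan.md")
for command in launch(agent_plan):
    print(command)
```

Each developer gets its own checkout and its own process, so parallel units cannot overwrite each other’s files. The cost is that a separate merge step is needed afterward.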

How It Works Step By Step

Layer 1: Pre-Processing. A FastAPI service receives a webhook from Jira, normalizes the ticket, and runs the Ticket Analyst agent, which calls a vision-capable LLM. The analyst scores completeness against a rubric, generates acceptance criteria and test scenarios, and detects conflicts with in-progress work. It then decides whether to enrich the ticket, ask for clarification, or decompose it into smaller tickets. For UI tickets, Figma URLs are extracted into design specs with frame PNGs for downstream visual verification.
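In the harness, the completeness judgment is made by an LLM against a rubric. The sketch below swaps in a deterministic stand-in to show the shape of the step: normalize the payload, score it, extract Figma links, and choose an action.

Several details are assumptions:
- The payload follows Jira’s `issue.key` / `issue.fields` structure.
- The description arrives as plain text.
- The rubric weights, thresholds, and the “ui” label convention are hypothetical.

A FastAPI webhook handler would call something like `analyze` (also hypothetical).

```python
import re
from dataclasses import dataclass, field

# Hypothetical rubric weights (sum to 100); the real analyst's rubric is not published.
RUBRIC = {"summary": 15, "description": 30, "acceptance_criteria": 35, "designs": 20}
FIGMA_URL = re.compile(r"https://(?:www\.)?figma\.com/(?:file|design)/[A-Za-z0-9]+[^\s)]*")


@dataclass
class Ticket:
    key: str
    summary: str
    description: str
    labels: list[str] = field(default_factory=list)


def normalize(payload: dict) -> Ticket:
    """Flatten a Jira webhook payload; assumes the description arrives as plain text."""
    issue = payload["issue"]
    fields = issue["fields"]
    return Ticket(
        key=issue["key"],
        summary=(fields.get("summary") or "").strip(),
        description=(fields.get("description") or "").strip(),
        labels=list(fields.get("labels") or []),
    )


def analyze(payload: dict) -> dict:
    """Deterministic stand-in for the LLM analyst: score completeness, pick an action."""
    ticket = normalize(payload)
    text = ticket.description
    is_ui = any(label.lower() == "ui" for label in ticket.labels)
    figma_urls = FIGMA_URL.findall(text)
    criteria = [ln for ln in text.splitlines() if ln.strip().startswith(("- ", "* "))]
    checks = {
        "summary": bool(ticket.summary),
        "description": len(text) >= 40,
        "acceptance_criteria": bool(criteria),
        "designs": bool(figma_urls) or not is_ui,  # only UI tickets need a Figma link
    }
    score = sum(RUBRIC[name] for name, ok in checks.items() if ok)
    if score < 60:
        action = "clarify"    # too incomplete to enrich
    elif len(criteria) > 8:
        action = "decompose"  # hypothetical size threshold
    else:
        action = "enrich"
    return {"key": ticket.key, "score": score, "action": action, "figma_urls": figma_urls}


ticket_payload = {
    "issue": {
        "key": "PROJ-123",
        "fields": {
            "summary": "Tighten header spacing on mobile",
            "description": "Header is cramped below 768px.\n- Logo aligns left\n- Nav collapses\n"
                           "Design: https://www.figma.com/design/AbC123/Header?node-id=1-2",
            "labels": ["UI"],
        },
    }
}
print(analyze(ticket_payload))
```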

Layer 2: Agent Team. The enriched ticket spawns a Team Lead agent that orchestrates the full pipeline. The Team Lead reads the enriched ticket and selects the pipeline mode (“simple” or “full” based on complexity), then spawns specialized sub-agents, each with a specific role and enforced tool restrictions:

A Planner decomposes the ticket into atomic units with a dependency graph

A Plan Reviewer critiques for gaps, missing edge cases, parallelization errors

Multiple Developers implement assigned units in parallel, each in an isolated git worktree

A Code Reviewer evaluates the merged result (read-only — cannot modify code)

A Judge re-reads the items flagged by the Code Reviewer and scores each finding from 0 to 100 to filter out false positives

A QA agent runs unit tests, integration tests, E2E browser flows, and Figma compliance checks, routing failures back to the developer via the Team Lead

A Merge Coordinator integrates parallel branches in topological order
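The Planner’s dependency graph and the Merge Coordinator’s topological order fit together naturally. The sketch below uses Python’s standard-library `graphlib`. It assumes the plan is a mapping from each unit ID to the IDs it depends on; the unit names are invented. Units in the same batch can go to parallel developers, and flattening the batches gives one valid merge order. A dependency cycle, the kind of error a Plan Reviewer should catch, surfaces as `CycleError`.

```python
from graphlib import CycleError, TopologicalSorter


def parallel_batches(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group units into waves; each wave depends only on units in earlier waves."""
    sorter = TopologicalSorter(deps)  # maps each unit to the units it depends on
    sorter.prepare()  # raises CycleError if the plan contains a dependency cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make the output deterministic
        batches.append(ready)
        sorter.done(*ready)
    return batches


def merge_order(deps: dict[str, set[str]]) -> list[str]:
    """Flatten the waves into one valid topological order for merging branches."""
    return [unit for batch in parallel_batches(deps) for unit in batch]


plan_graph = {"db": set(), "api": set(), "service": {"db"}, "ui": {"api", "service"}}
print(parallel_batches(plan_graph))  # units in the same inner list can run in parallel
print(merge_order(plan_graph))
```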

Importantly, our Team Lead never writes code itself. It reads each sub-agent's output, decides what to do next (re-route failures, escalate, or advance to the next phase), and logs every phase transition.

For small tickets, the Lead skips the Planner and Merge Coordinator and runs a simplified pipeline with a single developer.
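The real Team Lead is itself an agent that reads outputs and decides what to do next. The deterministic state machine below only illustrates the control flow described above: pick a mode, advance on success, re-route failures to development, escalate after two fix cycles, and log every transition.

Several details are assumptions:
- The phase names and their order.
- Simple mode dropping the plan review along with the Planner.
- The `select_mode` rule.
- Applying the two-fix-cycle limit to every failure.

```python
from dataclasses import dataclass, field

# Assumed phase order; the reviewer sees the merged result, so merge precedes review.
PHASES = {
    "simple": ["develop", "review", "judge", "qa"],
    "full": ["plan", "plan_review", "develop", "merge", "review", "judge", "qa"],
}
MAX_FIX_CYCLES = 2  # two fix cycles, then escalate


def select_mode(estimated_units: int) -> str:
    """Hypothetical complexity rule: single-unit tickets take the simple pipeline."""
    return "simple" if estimated_units <= 1 else "full"


@dataclass
class TeamLead:
    mode: str
    phase_index: int = 0
    fix_cycles: int = 0
    log: list[str] = field(default_factory=list)

    @property
    def phase(self) -> str:
        return PHASES[self.mode][self.phase_index]

    def on_result(self, passed: bool) -> str:
        """Read a sub-agent's outcome, decide the next step, and log the transition."""
        phases = PHASES[self.mode]
        current = self.phase
        if passed and self.phase_index + 1 == len(phases):
            decision = "done"
        elif passed:
            self.phase_index += 1
            decision = f"advance:{self.phase}"
        elif self.fix_cycles >= MAX_FIX_CYCLES:
            decision = "escalate"
        else:
            self.fix_cycles += 1
            self.phase_index = phases.index("develop")  # re-route the failure to development
            decision = "reroute:develop"
        self.log.append(f"{current} -> {decision}")
        return decision


lead = TeamLead(select_mode(estimated_units=1))
for outcome in [True, True, True, False]:
    lead.on_result(outcome)
print(lead.log)
```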

Lastly, the constraints within the pipeline aren’t suggestions in a system prompt. They’re tool-access restrictions. The code reviewer literally cannot write code. The QA agent cannot modify source files.
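One way to picture those restrictions is a per-role allowlist checked before every tool call. The tool names below follow Claude Code’s built-in tools (Read, Grep, Glob, Bash, Edit, Write). The role mapping, the `qa-reports` directory, and `check_tool_call` are hypothetical. In practice, enforcement belongs in the runtime (for example, Claude Code’s permission settings) rather than in application code.

```python
from pathlib import PurePosixPath
from typing import Optional

# Hypothetical role -> tool allowlists; tool names follow Claude Code's built-in tools.
ROLE_TOOLS = {
    "code_reviewer": {"Read", "Grep", "Glob"},
    "judge": {"Read", "Grep", "Glob"},
    "qa": {"Read", "Grep", "Glob", "Bash", "Write"},
    "developer": {"Read", "Grep", "Glob", "Bash", "Edit", "Write"},
}
# Roles whose file writes are confined to specific directories (QA may only write reports).
WRITE_ROOTS = {"qa": [PurePosixPath("qa-reports")]}
FILE_WRITE_TOOLS = {"Edit", "Write"}


def check_tool_call(role: str, tool: str, path: Optional[str] = None) -> None:
    """Raise PermissionError unless `role` may call `tool` (on `path`, for file writes)."""
    if tool not in ROLE_TOOLS.get(role, set()):
        raise PermissionError(f"{role} may not use {tool}")
    if tool in FILE_WRITE_TOOLS and role in WRITE_ROOTS:
        target = PurePosixPath(path or "")
        # Reject absolute paths and ".." so a write cannot escape its sandbox lexically.
        if target.is_absolute() or ".." in target.parts:
            raise PermissionError(f"{role} may not write outside its sandbox: {path}")
        if not any(target.is_relative_to(root) for root in WRITE_ROOTS[role]):
            raise PermissionError(f"{role} may not write {path}")


check_tool_call("developer", "Edit", "src/app.ts")     # allowed
check_tool_call("qa", "Write", "qa-reports/matrix.md")  # allowed: inside the report directory
```

This sketch only covers file-editing tools. A role with Bash access can still change files through the shell, so Bash needs its own sandboxing.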

Layer 3: PR Review. GitHub webhooks trigger an architecture review on new PRs, auto-fix agents for CI failures, and routing for human review. The architecture review examines the PR in relation to the rest of the codebase. As a final step, a human reviews the PR, which carries detailed notes on the plan, implementation, self-review, and QA validation.
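A sketch of the Layer 3 entry point follows: verify the webhook, then map the event to a pipeline step. The `X-GitHub-Event` and `X-Hub-Signature-256` headers and the `pull_request` and `check_suite` payload fields are GitHub’s. The handler names and the rule that a green check suite queues human review are hypothetical.

```python
import hashlib
import hmac
from typing import Optional


def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check GitHub's X-Hub-Signature-256 header (HMAC-SHA256 of the raw request body)."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_header)


def route_github_event(event: str, payload: dict) -> Optional[str]:
    """Map an X-GitHub-Event name plus payload to a hypothetical pipeline handler."""
    action = payload.get("action")
    if event == "pull_request" and action in {"opened", "ready_for_review"}:
        if payload["pull_request"].get("draft"):
            return None  # leave draft PRs alone until they are marked ready
        return "architecture_review"
    if event == "check_suite" and action == "completed":
        conclusion = payload["check_suite"].get("conclusion")
        if conclusion == "failure":
            return "auto_fix"      # CI failed: hand the PR to an auto-fix agent
        if conclusion == "success":
            return "human_review"  # CI green: queue the PR for a human reviewer
    return None


print(route_github_event("pull_request", {"action": "opened", "pull_request": {"draft": False}}))
```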

Credit Where It’s Due

The orchestration frameworks solve real problems. LangGraph’s durable execution (workflows that checkpoint and survive crashes) is genuinely useful in production. CrewAI Flows offers clean event-driven abstractions. OpenAI’s handoff model is exactly right for customer service routing. These are excellent infrastructure, but they are not a substitute for domain expertise. You wouldn’t launch a restaurant by designing the perfect kitchen and then hiring chefs who’ve only ever read recipe blogs. Right?

Anthropic’s Validation

Anthropic published a detailed account of their own multi-agent research system. Their key finding: performance gains came from spreading reasoning across multiple independent context windows with good task decomposition, not from sophisticated inter-agent protocols.

Their system uses Claude Opus as the lead agent with Claude Sonnet sub-agents, and it outperformed a single-agent Claude Opus by 90.2% on Anthropic’s internal research evaluation. This is the architecture we adopted: decompose, work independently, synthesize. The value lies in decomposition quality and per-agent capability.

This matches our experience. Our Judge agent, which re-reads the actual code at each flagged line and scores whether a finding is real, adds more value than any amount of orchestration sophistication. It directly prevents wasted developer cycles on phantom issues. Domain depth, not coordination depth.
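A sketch of the Judge’s filtering step follows. It assumes findings carry a file path and a 1-based line number, and that scoring is delegated to a callable. In the harness that callable would be an LLM call; here it is a stand-in. The 80-point threshold, the two-line context window, and the `Finding` and `judge_findings` names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    path: str
    line: int  # 1-based line number reported by the Code Reviewer
    message: str


def judge_findings(
    findings: list[Finding],
    read_file: Callable[[str], str],
    score: Callable[[Finding, str], int],
    threshold: int = 80,
) -> list[tuple[Finding, int]]:
    """Re-read the code at each flagged line and keep findings scoring >= threshold."""
    confirmed = []
    for finding in findings:
        lines = read_file(finding.path).splitlines()
        if not 1 <= finding.line <= len(lines):
            continue  # the flagged line does not exist: treat as a phantom finding
        i = finding.line - 1
        window = "\n".join(lines[max(0, i - 2): i + 3])  # flagged line plus 2 lines each side
        value = score(finding, window)
        if not 0 <= value <= 100:
            raise ValueError(f"score out of range: {value}")
        if value >= threshold:
            confirmed.append((finding, value))
    return confirmed


files = {"app.py": "x = 1\npassword = 'hunter2'\nprint(x)\n"}


def fake_score(finding: Finding, window: str) -> int:
    # Stand-in for the LLM judge: confirm the secret only if it is really in the code.
    return 95 if "secret" in finding.message and "password" in window else 30


flagged = [Finding("app.py", 2, "hard-coded secret"), Finding("app.py", 40, "SQL injection"),
           Finding("app.py", 3, "unused variable")]
kept = judge_findings(flagged, files.__getitem__, fake_score)
print(kept)
```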

When to Use What

Use an orchestration framework in these cases:
- For general-purpose work (content generation, research, customer routing).
- When the LLM’s training data is sufficient for each agent.
- When you need durable execution or complex routing.
- When your team is prepared to build all of the agent intelligence itself.

Use a knowledge-first approach when agents need deep domain expertise and when quality gates matter more than coordination speed. For example, a ticket typically takes twice as long with our pipeline.

For software development, we believe knowledge-first is the right approach, and worth the time it takes to build. The hard problem isn’t getting agents to talk to each other; we solved that two years ago. It’s making each agent good enough that the pipeline produces work a human approves on first review.

What We Measure

First-pass acceptance rate: PRs approved without revision. Target: >80%. Yes, you read that correctly: our harness targets human approval on first review for more than 80% of PRs!

Defect escape rate: Merged PRs with bugs found later. Target: <5%.

Self-review catch rate: Issues found by humans that AI review also flagged. Target: >85%.
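The three metrics reduce to simple ratios. The sketch below assumes a per-PR record with these fields; the field names and `PRRecord` are hypothetical, and the targets are the ones stated above. A metric with no data (for example, no human-found issues yet) is reported as `None` rather than as a perfect score.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PRRecord:
    approved_first_pass: bool     # approved without any revision request
    merged: bool
    escaped_defect: bool          # a bug traced to this PR after it merged
    human_issues: int             # issues raised by human reviewers
    human_issues_ai_flagged: int  # ...of which the AI review had also flagged


TARGETS = {"first_pass_acceptance": (">", 0.80), "defect_escape": ("<", 0.05), "self_review_catch": (">", 0.85)}


def compute_metrics(prs: list[PRRecord]) -> dict[str, Optional[float]]:
    merged = [p for p in prs if p.merged]
    human_total = sum(p.human_issues for p in prs)
    return {
        "first_pass_acceptance": sum(p.approved_first_pass for p in prs) / len(prs) if prs else None,
        "defect_escape": sum(p.escaped_defect for p in merged) / len(merged) if merged else None,
        "self_review_catch": sum(p.human_issues_ai_flagged for p in prs) / human_total if human_total else None,
    }


def meets_targets(metrics: dict[str, Optional[float]]) -> dict[str, Optional[bool]]:
    results: dict[str, Optional[bool]] = {}
    for name, (op, target) in TARGETS.items():
        value = metrics[name]
        if value is None:
            results[name] = None  # not enough data to judge
        else:
            results[name] = value > target if op == ">" else value < target
    return results


prs = [PRRecord(True, True, False, 0, 0) for _ in range(8)]
prs += [PRRecord(False, True, False, 3, 3), PRRecord(True, True, False, 1, 0)]
metrics = compute_metrics(prs)
print(metrics)
print(meets_targets(metrics))
```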

When the catch rate exceeds 85% over a rolling 30-day window, we expand auto-merge scope. The human doesn’t leave the loop. Instead of reviewing every PR, once the system has proven itself, the developer can move to reviewing a statistical sample. Graduated autonomy, not blind trust. This is a hard bar to clear, but we are confident that most of our customers can reach it.
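The graduated-autonomy gate can be sketched in three steps:
1. Compute the catch rate over a rolling 30-day window.
2. Switch from reviewing everything to sampling once that rate clears 85%.
3. Draw the sample at random.

The event format, the 20% sample rate, the minimum of one reviewed PR, and the function names are hypothetical.

```python
import random
from datetime import datetime, timedelta
from typing import Optional


def rolling_catch_rate(events: list[tuple[datetime, bool]], now: datetime, days: int = 30) -> Optional[float]:
    """events: one (found_at, ai_also_flagged) pair per human-found issue."""
    cutoff = now - timedelta(days=days)
    window = [flagged for found_at, flagged in events if found_at >= cutoff]
    return sum(window) / len(window) if window else None


def review_policy(catch_rate: Optional[float], threshold: float = 0.85) -> str:
    """Switch to sampled review only once the rolling catch rate clears the bar."""
    return "sampled" if catch_rate is not None and catch_rate > threshold else "review_all"


def select_for_review(pr_ids: list[int], policy: str, sample_rate: float = 0.2,
                      seed: Optional[int] = None) -> list[int]:
    if policy == "review_all" or not pr_ids:
        return list(pr_ids)
    k = max(1, round(len(pr_ids) * sample_rate))  # always review at least one PR
    return sorted(random.Random(seed).sample(pr_ids, k))


now = datetime(2025, 6, 30)
events = [(now - timedelta(days=d), True) for d in range(9)]
events += [(now - timedelta(days=10), False)] + [(now - timedelta(days=45), False)] * 5
rate = rolling_catch_rate(events, now)  # the five 45-day-old misses fall outside the window
print(rate, review_policy(rate), select_for_review(list(range(10)), review_policy(rate), seed=7))
```

Random sampling, rather than picking the “easy” PRs, keeps the sample representative. It also keeps the catch-rate measurement honest.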

Finally, none of these metrics measure orchestration sophistication. They measure output quality. That’s the point. Quality first.

The XC Agent Harness is a tool built by XCentium’s GenAI Practice. It turns Jira tickets into reviewed, tested pull requests, using Claude Code as the execution engine. It supports multi-client routing, parallel development in isolated worktrees, and visual design verification against Figma exports.

Sources

· Google Research & MIT. "Towards a Science of Scaling Agent Systems: When and Why Agent Systems Work." December 2025. https://research.google/blog/towards-a-science-of-scaling-agent-systems-when-and-why-agent-systems-work/

· Anthropic. "How We Built Our Multi-Agent Research System." June 2025. https://www.anthropic.com/engineering/multi-agent-research-system

· CrewAI. "Introduction — CrewAI Documentation." https://docs.crewai.com/en/introduction

· LangChain. "LangChain and LangGraph Agent Frameworks Reach v1.0 Milestones." November 2025. https://blog.langchain.com/langchain-langgraph-1dot0/

· CrewAI GitHub Repository. https://github.com/crewAIInc/crewAI